From Training RAs to Training Workflows

A Hidden Cost of AI-Assisted Research

Everyone talks about the speed gains from moving parts of a research workflow from human RAs to AI. Those gains are real. Literature reviews get faster. Code scaffolding gets faster. First drafts get faster. But I think the difference we have not yet resolved is not speed. It is error identification and correction.

In an RA-based workflow, many mistakes are routine and familiar. A variable gets miscoded. A citation is dropped. A table is mislabeled. A robustness check is run on a weird sample. Those errors are frustrating, but they are often legible. Over time, a PI learns typical RA failure modes, and the RA learns the PI’s standards. Correction is not just repair; it is training. Fix a recurring mistake once or twice, and there is a decent chance the person will not make it again.

AI changes that bargain. Some AI errors are also routine. But many are stranger: fabricated citations, code that runs while quietly doing the wrong thing, summaries that sound polished while smuggling in a false premise. The fluency makes these errors harder to spot, not easier. And even when you do catch them, it is often unclear what exactly has been fixed. Did you correct the system, or did you just correct this one output?

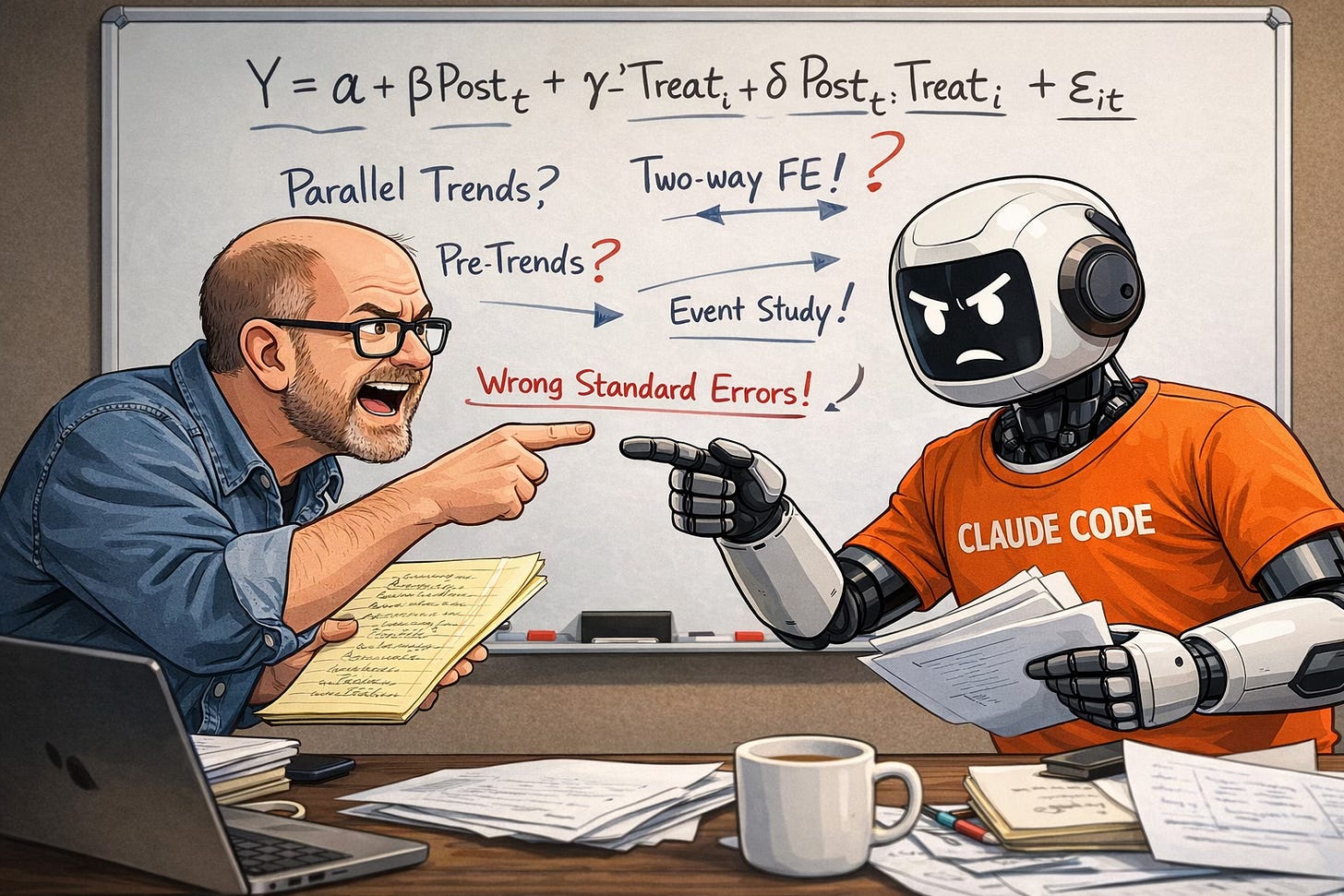

Scott Cunningham has a great example here, in which he had to battle Claude to detect an important error.

Here is a reenactment of the scene:

In Scott’s case and in other cases, did he correct the system, or did he just correct this one output?

This question is an important one. A human RA, ideally, accumulates judgment. An AI system often behaves as if it forgets, because the correction was never written into anything durable. Unless the lesson gets encoded in the workflow — in prompts, templates, tests, retrieval, validators, or some other piece of infrastructure — the same error can return next week in slightly different clothing.

One analogy I keep coming back to is gene editing. A one-off correction to an AI output is a bit like a somatic edit: useful in the local case, but not automatically inherited by the next generation. A change to the workflow itself is closer to a germline edit: future outputs inherit it. In human genome editing, somatic edits generally affect only the treated individual, while germline edits can be heritable.
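To make the workflow-level fix concrete, here is a minimal sketch of what encoding one lesson as a validator might look like. Everything in it is an assumption for illustration: the `[@key]` citation syntax, the names `Check`, `make_citation_check`, and `review`, and the placeholder keys are all hypothetical, not part of any particular tool. The idea is only that once a fabricated citation has been caught by hand, the check is written down once and then runs on every later draft.

```python
import re
from dataclasses import dataclass
from typing import Callable

# Hypothetical citation syntax: keys written as [@some_key] in the draft.
CITE_PATTERN = re.compile(r"\[@([A-Za-z0-9_:-]+)\]")


@dataclass(frozen=True)
class Check:
    """One durable lesson: a name plus a function that returns problems found."""
    name: str
    run: Callable[[str], list[str]]


def make_citation_check(verified_keys: set[str]) -> Check:
    """Flag any cited key that is missing from a hand-verified bibliography."""
    def run(text: str) -> list[str]:
        cited = CITE_PATTERN.findall(text)
        return [f"unverified citation: {key}" for key in cited
                if key not in verified_keys]
    return Check("citations_exist", run)


def review(text: str, checks: list[Check]) -> list[str]:
    """Run every recorded check against a draft and collect the problems."""
    problems: list[str] = []
    for check in checks:
        problems.extend(f"{check.name}: {msg}" for msg in check.run(text))
    return problems


# Placeholder keys, not real references.
checks = [make_citation_check({"verified_paper_1"})]
draft = "The effect is small [@verified_paper_1], as confirmed by [@invented_paper_9]."
print(review(draft, checks))
```

Each new lesson becomes another entry in `checks`, so the list itself is the memory that lives outside the model. A check like this only catches the failure modes someone has already noticed and written down.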

Encoding a fix in the workflow seems easy enough for a single error. But (1) we do not catch all errors, and (2) do we really want to spend our research lives updating our Claude skills error by error?

I’ve been thinking about this partly through conversations with Sendhil Mullainathan, who pushed me to focus less on whether AI can produce more, and more on what kind of system we are actually building. That is also why I want to link to his Hilldale lecture, We’re Building the Wrong AI. The title gets at the issue exactly: the question is not just whether AI can generate output, but whether it helps us build research systems that accumulate understanding rather than just volume.

So I do not think the shift from RA-based work to AI-assisted work is only, or even mainly, about labor substitution. It is about moving from training people to training pipelines. In the old model, the PI’s supervisory investment could compound inside a person. In the new model, it only compounds if the lab learns how to build memory outside the model. That is a very different managerial problem. It may also turn out to be one of the central research-design problems of the AI era, at least right now.

I believe Claude has a memory.md file that it autonomously updates to try to understand workflow and best practices. The problem you are highlighting also goes beyond research workflows: my guess is that most major organizations rely on a lot of tacit knowledge built up in their workforce. I will also speculate that Anthropic is probably trying to figure out how to build larger memory contexts so that agents learn more about how we work in each session. Thanks again for these posts!