Which AI?

Why researchers are talking past each other more than ever

A quiet shift is happening in research conversations.

Not a new method.

Not a new dataset.

Not even a new identification strategy.

A new question.

“Which AI?”

And increasingly, this is not a trivial clarification. It’s the question.

The Old World: Smooth Frontiers

For most of my career, research tools behaved in a reassuringly stable way.

If a method worked in case A, you could be reasonably confident it would work in case A′—as long as A′ was “close enough.” Small tweaks, small changes. The frontier was smooth.

Occasionally, when trying to overcome a weird problem, you’d hear:

“Are you on the latest version of Stata?”

“Did you update that diff-in-diff package?”

But those were not typical cases. They weren’t the main explanation for why something failed.

The New World: Jagged Frontiers

AI has changed this.

We’re now operating on what has been called a jagged frontier.

Two tasks that look nearly identical can produce wildly different results:

One prompt works perfectly.

A slightly different phrasing collapses into nonsense.

One model nails the task.

Another fails in ways that seem almost comical.

This breaks a deeply ingrained heuristic in research:

“If it worked there, it should work here.”

With AI, that inference fails much more often than we expect.

Importantly, once you understand the jagged frontier, a failed attempt becomes a reason to iterate, not evidence that the problem is inherently hard.

But There’s a Second, Bigger Shift

The jagged frontier is only half the story.

The bigger shift is that we’re no longer all using the same tool—even when we think we are.

And that’s where “Which AI?” becomes essential.

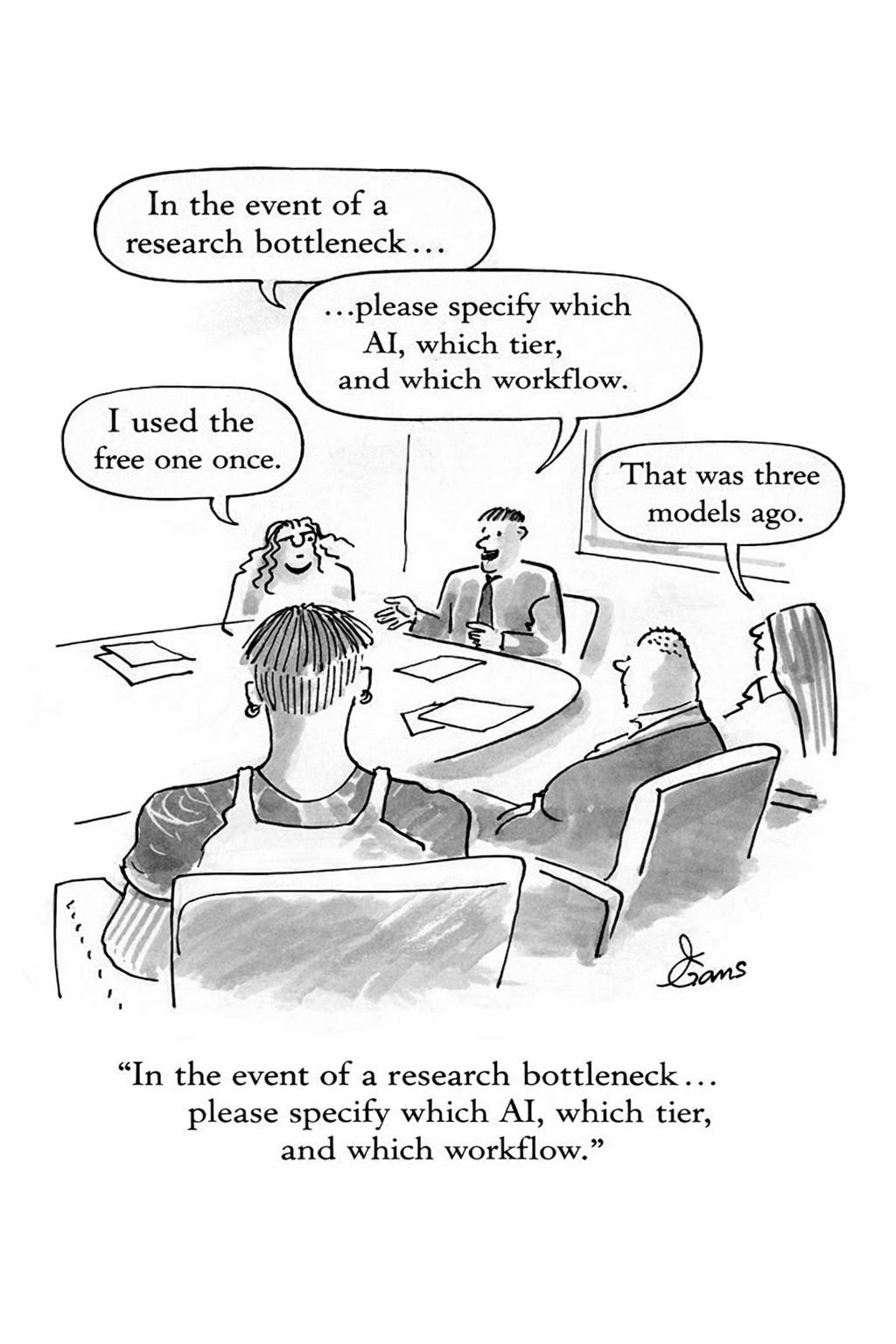

Case 1: The Dismissive Colleague

You’ve probably had this conversation:

“AI is useless. I tried it a few months ago. It can’t even add 3 + 4.”

This person is not irrational. They are reporting a real experience.

But there are at least three hidden variables:

Time

“A few months ago” is now a long time ago.

Capabilities shift meaningfully in weeks, not years.

Tier

Free vs $20 vs $200 is not a minor difference.

These are not different “versions”—they are different regimes.

Interface / defaults

What tool? What settings? What context length?

So when someone says “AI doesn’t work,” what they often mean is:

“The specific configuration of AI I used at a particular moment failed on a particular task.”

That’s a much narrower claim.

But it’s rarely interpreted that way. They think they are learning something like “Stata doesn’t work for this,” a stable fact about a stable tool, but they are not.

Case 2: The Stuck Researcher

A more interesting case is the colleague who says:

“I tried using AI for literature reviews—it just doesn’t work.”

Again, often true in their setup.

But here’s what’s new:

The solution is frequently not:

a new dataset

a new method

or even more time

Instead, it’s something like:

breaking the task into stages

using a different model

adding retrieval or structure

or learning one additional “AI skill”

And suddenly:

The same task that “didn’t work” now works surprisingly well.

The Two Dimensions of “Which AI?”

When we ask “Which AI?”, we’re really asking two questions:

1. Which Tier?

Free

Subscription ($20)

High-tier / pro ($200+)

These differ in:

reasoning ability

context length

reliability

tool use

This is closer to switching from:

SPSS → Stata

…than upgrading from Stata 16 to Stata 17.

2. Which Skill Stack?

Equally important—and less visible—is:

What skills are being used with the AI?

Examples:

Prompt structuring

Decomposing tasks

Iterative refinement

Using it as a pipeline rather than a one-shot answer machine

Knowing when to switch models

These are not just “tips.”

They are closer to:

Learning regression vs learning IV vs learning diff-in-diff.

Why This Is So Unusual

A few years ago, it would have been strange if the answer to most research problems was:

“Have you upgraded your software?”

“Are you using the right version?”

Now, that answer is increasingly common.

In fact, it’s often the answer.

And this creates a new kind of confusion:

People assume they are using the same tool

They assume failures are fundamental

They don’t realize the solution may be simple and proximal

This is much closer to:

“You’re on the wrong version—upgrade and it works”

…than most researchers expect.

The Hidden Cost: Miscommunication

This leads to systematic miscommunication:

The skeptic thinks AI is overhyped

The enthusiast thinks others are underusing it

Both are correct, within their own frame of reference

But they’re talking about different objects.

A Practical Rule

Here’s a simple update to your research workflow:

Whenever AI comes up, ask “Which AI?” before forming a conclusion.

And be specific:

Which model?

Which tier?

Which workflow?

Which skills?

Without that, you’re often comparing:

apples to oranges to something that didn’t exist three months ago.
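One way to make those four questions concrete is to write the answers down next to any AI-assisted result. The sketch below is only an illustration: the `AIConfig` record, its field names, the `differences` helper, and every example value (model names, dates) are hypothetical placeholders, not a standard or anyone's actual setup.

```python
from dataclasses import dataclass, asdict
from datetime import date


@dataclass(frozen=True)
class AIConfig:
    """Hypothetical record of 'which AI' produced a result."""
    model: str               # which model (placeholder names below)
    tier: str                # e.g. "free", "subscription", "pro"
    workflow: str            # e.g. "one-shot prompt", "staged pipeline"
    skills: tuple[str, ...]  # techniques applied, if any
    used_on: date            # when it was tried


def differences(a: AIConfig, b: AIConfig) -> dict[str, tuple]:
    """Return the fields on which two configurations disagree."""
    da, db = asdict(a), asdict(b)
    return {key: (da[key], db[key]) for key in da if da[key] != db[key]}


# Illustrative values only; none of these come from a real comparison.
skeptic = AIConfig(
    model="model-a",
    tier="free",
    workflow="one-shot prompt",
    skills=(),
    used_on=date(2025, 1, 15),
)
enthusiast = AIConfig(
    model="model-b",
    tier="subscription",
    workflow="staged pipeline",
    skills=("task decomposition", "iterative refinement"),
    used_on=date(2025, 6, 1),
)

for field, (left, right) in differences(skeptic, enthusiast).items():
    print(f"{field}: {left!r} vs {right!r}")
```

If two people disagree about whether AI “works” for a task, listing the fields where their records differ is a quick check on whether they are even describing the same object.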

A Final Thought

We’ve spent decades training ourselves to think in terms of:

identification strategies

robustness

external validity

AI introduces a new layer:

“Which AI?” is now part of the method

And unlike most tools we’ve used, this one is:

rapidly evolving

highly heterogeneous

and unusually sensitive to how it’s used

Which means that a growing share of research disagreements may not be about ideas at all.

They may start with a much simpler question:

Which AI are you talking about?